Real Face or AI-Generated Fake?

I created an app to help you tell the difference.

We have seen great advances in artificial intelligence over the past couple of years. AI has applications in industries like healthcare, fashion, education, and agriculture, and it is predicted to be one of the next big digital disruptions.

As Andrew Ng puts it:

Just as electricity transformed almost everything 100 years ago, today I actually have a hard time thinking of an industry that I don’t think AI will transform in the next several years.

However, AI is a tool that can be misused. In the wrong hands, it is powerful enough to disrupt people's everyday lives.

In this article, I will cover an application of AI that has been used to trick people and spread misinformation.

Applications of AI trickery

In the past few years, an application of AI called “deepfake” has taken the Internet by storm. Deepfakes are highly convincing fake videos created by neural networks.

Faces of celebrities and politicians were pasted onto other people's bodies to create fake videos.

In 2020, deepfakes went mainstream.

They have been widely used in ads and TV shows, and increasingly to spread false information.

In 2018, a video was created depicting Barack Obama calling Donald Trump an expletive. A few minutes into the video, it was revealed that Obama never really uttered those words. Rather, they were said by “Get Out” director and writer Jordan Peele.

Peele's voice had been digitally inserted into a video of the former president to create a deepfake. The video demonstrates how convincing deepfakes can be, and how easily they can be used to spread misinformation.

Deep learning models can also be used to create fake images.

AI models can generate fake faces that look almost identical to photos of real people. They have improved dramatically over the years, and it is now almost impossible to distinguish a real face from an AI-generated fake.

Can you tell which one of these faces is real?

The face on the right is real. The photo on the left was generated by an AI application.

The technology behind fake images

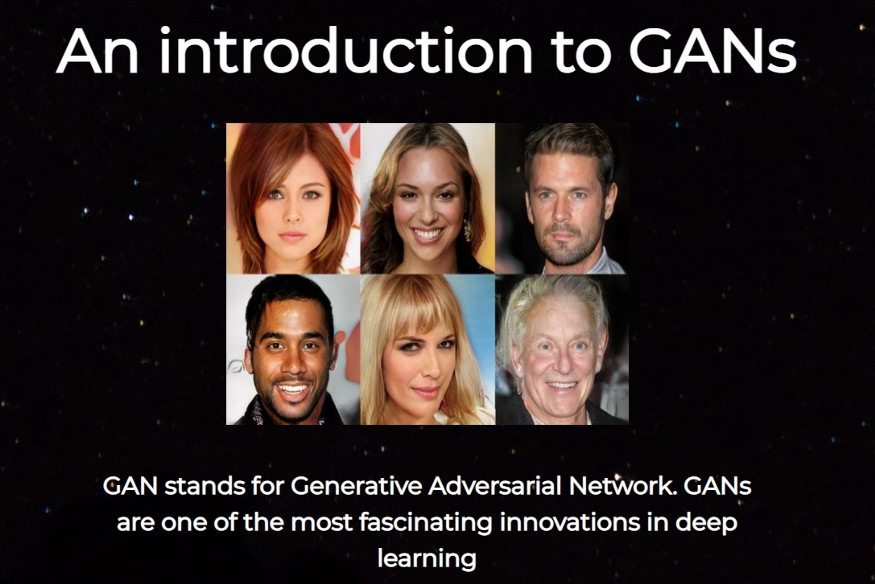

Deepfakes and fake images are generated by a class of machine learning models called “GANs.”

GAN stands for Generative Adversarial Network. GANs were introduced by researcher Ian Goodfellow and his colleagues in 2014.

The Idea

It all started when AI researcher Ian Goodfellow came up with an idea. He wanted to create a deep learning model that was capable of generating fake data.

His idea involved using two neural networks, and having them compete against each other.

The first network would learn to generate fake images that resemble those in an existing dataset. The second network would learn to tell real images from fake ones.

The first network is called the generator, and the second is called the discriminator.

The generator’s job was to trick the discriminator into believing its images were real. As the discriminator got better at spotting fake images, the generator would have to produce more realistic images to keep fooling it.
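For readers who want the formal version: in the 2014 paper, Goodfellow and his colleagues write this contest as a two-player minimax game. Here $D(x)$ is the discriminator's estimated probability that $x$ is a real image, $G(z)$ is the image the generator produces from a random noise vector $z$, $p_{\text{data}}$ is the distribution of real images, and $p_z$ is the noise distribution:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator raises $V$ by scoring real images close to 1 and fakes close to 0; the generator lowers $V$ by producing images that push $D(G(z))$ toward 1. The paper also notes that, in practice, the generator is often trained to maximize $\log D(G(z))$ instead, because that gives it stronger gradients early in training, when its fakes are easy to spot.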

The generator and discriminator are adversaries: as one network got better, so did the other.
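To make the back-and-forth concrete, here is a minimal sketch of adversarial training on a toy problem of my own choosing (this is not Goodfellow's code, and it uses numbers rather than images). The "real data" are numbers drawn from a normal distribution with mean 2.0, the generator is a linear function of random noise, and the discriminator is a logistic regression. Both are updated with hand-derived gradient steps, taking turns exactly as described above. The function names and hyperparameters are illustrative assumptions.

```python
import math
import random


def sigmoid(u: float) -> float:
    """Numerically stable logistic function."""
    if u >= 0:
        return 1.0 / (1.0 + math.exp(-u))
    e = math.exp(u)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, batch=64, lr=0.05, real_mean=2.0, real_std=0.5, seed=0):
    """Alternate discriminator and generator updates on 1-D toy data.

    Toy assumptions (not from the article): real data ~ N(real_mean, real_std),
    generator G(z) = a*z + b with z ~ N(0, 1), discriminator D(x) = sigmoid(w*x + c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator starts out producing N(0, 1), far from the real data
    w, c = 0.0, 0.0  # discriminator starts out answering 0.5 for everything

    for _ in range(steps):
        # Discriminator step: push D(real) toward 1 and D(fake) toward 0
        # by descending the loss -log D(x_real) - log(1 - D(x_fake)).
        real = [rng.gauss(real_mean, real_std) for _ in range(batch)]
        fake = [a * rng.gauss(0.0, 1.0) + b for _ in range(batch)]
        gw = gc = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            gw -= (1.0 - d) * x
            gc -= 1.0 - d
        for x in fake:
            d = sigmoid(w * x + c)
            gw += d * x
            gc += d
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step: push D(G(z)) toward 1 by descending -log D(G(z)),
        # judged by the discriminator that was just updated.
        ga = gb = 0.0
        for _ in range(batch):
            z = rng.gauss(0.0, 1.0)
            d = sigmoid(w * (a * z + b) + c)
            # Chain rule: d/dx[-log D(x)] = -(1 - D(x)) * w, and x = a*z + b.
            ga -= (1.0 - d) * w * z
            gb -= (1.0 - d) * w
        a -= lr * ga / batch
        b -= lr * gb / batch

    return a, b, w, c


if __name__ == "__main__":
    a, b, w, c = train_toy_gan()
    # The average generated sample is b, because the noise z has mean 0.
    print(f"mean of generated samples: {b:.2f} (real mean: 2.0)")
```

The generator never sees a real sample; it moves `b` toward 2.0 only because the discriminator keeps rejecting its output. One caveat of this toy: a linear discriminator can only compare where the two sets of numbers sit, not how spread out they are, so the generator learns the real mean but not the real standard deviation. Image GANs replace both functions with deep neural networks.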

Ian came up with this idea at a bar with some friends, who told him it wouldn't work. They didn't think it was possible to train a second neural network in the inner loop of the first, and they assumed training wouldn't converge.

When he went home that night, Ian decided that it was worth giving the idea a shot. He wrote some code and tested his software. And it worked the first time he tried it!

What he invented is now called a GAN, and is one of the most fascinating innovations in the field of deep learning. In fact, Yann LeCun, Facebook’s chief AI scientist, has called GANs “the coolest idea in deep learning in the last 20 years.”

Real vs GAN-generated images

As I mentioned above, GAN-generated images can be very convincing. Neural networks have gotten alarmingly good at creating realistic human faces.

This can be dangerous, since GANs can be used to create fake dating profiles, catfish people, and spread fake information.

It is very important for us to be able to distinguish between fake and real.

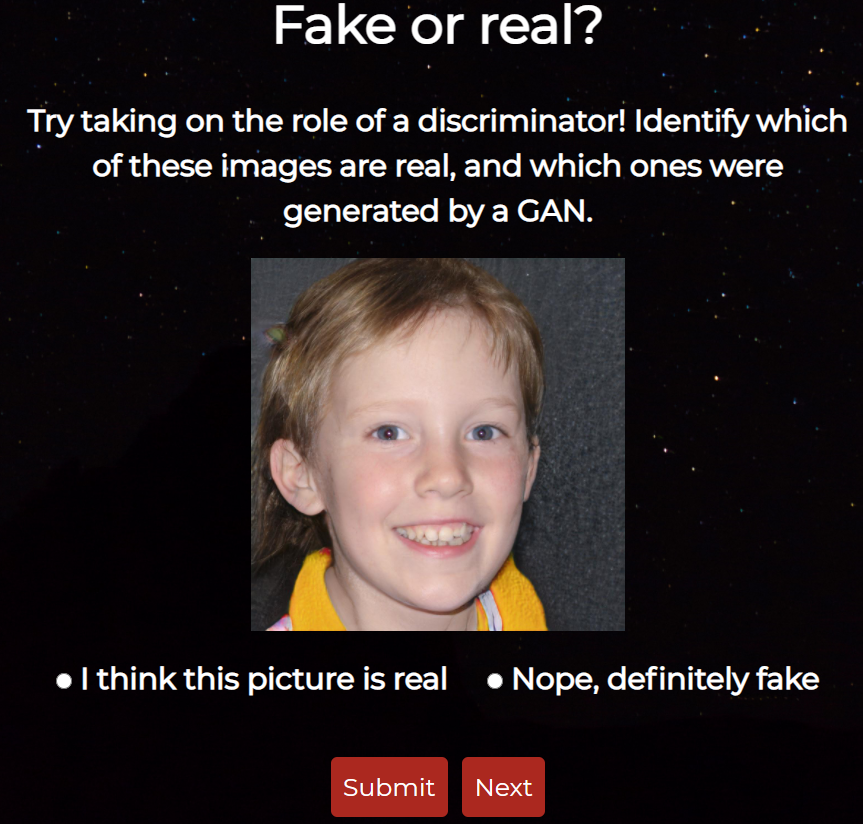

I created an application to quiz you on whether you can tell a real person’s face from a fake one.

You can take my quiz and try to identify which images show real faces and which are GAN-generated.

I used the 1 Million Fake Faces dataset for the GAN-generated images in this project, and the UTKFace dataset from Kaggle for the real images.
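The post doesn't show how the quiz questions are assembled, so here is a minimal sketch of one way to do it, assuming the images from both datasets have already been downloaded and their file paths collected into two lists. `QuizItem` and `build_quiz` are hypothetical names, not the quiz's actual code.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class QuizItem:
    image_path: str
    is_real: bool  # True for a real photo (e.g. UTKFace), False for a GAN-generated face


def build_quiz(real_paths, fake_paths, n_questions, seed=None):
    """Draw equal numbers of real and fake faces and shuffle them together."""
    if n_questions % 2:
        raise ValueError("n_questions must be even to balance real and fake images")
    half = n_questions // 2
    if half > len(real_paths) or half > len(fake_paths):
        raise ValueError("not enough images for the requested quiz length")
    rng = random.Random(seed)
    items = [QuizItem(p, True) for p in rng.sample(real_paths, half)]
    items += [QuizItem(p, False) for p in rng.sample(fake_paths, half)]
    rng.shuffle(items)  # so the player can't infer labels from the order
    return items


# Usage with placeholder paths (hypothetical folder names):
real = [f"real/{i}.jpg" for i in range(10)]
fake = [f"fake/{i}.jpg" for i in range(10)]
quiz = build_quiz(real, fake, n_questions=6, seed=1)
print(sum(item.is_real for item in quiz))  # 3
```

Balancing the two classes is a deliberate choice: a player who always answers "real" scores exactly 50%, so any score above that reflects actual skill at spotting fakes.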

My quiz looks like this:

All you need to do is guess whether the picture you see on the page is fake or real. As you keep practicing, you will get better at identifying computer-generated images.
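The post doesn't say how the quiz keeps score, so here is a hypothetical sketch of how you could check whether practice is paying off: overall accuracy, plus accuracy over consecutive rounds of questions. The function names, and the convention that `True` means "real face", are my own assumptions.

```python
from typing import List, Sequence


def accuracy(answers: Sequence[bool], guesses: Sequence[bool]) -> float:
    """Fraction of guesses that match the true labels (True = real face)."""
    if len(answers) != len(guesses) or not answers:
        raise ValueError("need the same, non-zero number of answers and guesses")
    return sum(a == g for a, g in zip(answers, guesses)) / len(answers)


def accuracy_by_round(answers: Sequence[bool], guesses: Sequence[bool],
                      round_size: int = 10) -> List[float]:
    """Accuracy for each consecutive block of `round_size` questions."""
    if len(answers) != len(guesses):
        raise ValueError("answers and guesses must have the same length")
    return [
        accuracy(answers[i:i + round_size], guesses[i:i + round_size])
        for i in range(0, len(answers), round_size)
    ]


answers = [True, False, True, False]
guesses = [True, True, True, False]
print(accuracy(answers, guesses))                        # 0.75
print(accuracy_by_round(answers, guesses, round_size=2))  # [0.5, 1.0]
```

A rising sequence of per-round accuracies is the sign that you are getting better at spotting computer-generated faces.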

In essence, the quiz asks you to do exactly what a discriminator does.

A GAN's discriminator learns the difference between real and fake images and gets better at spotting fakes over time, and so will you as you take the quiz.
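To push the analogy a little further, here is a hypothetical sketch that scores your guesses the way one might evaluate a discriminator. It separates how often you accepted real faces as real from how often GAN images fooled you; that "fool rate" is exactly what a generator is trained to push up. The function and key names are illustrative, not part of the actual quiz.

```python
from collections import Counter
from typing import Dict, Sequence


def discriminator_report(answers: Sequence[bool], guesses: Sequence[bool]) -> Dict[str, float]:
    """Summarize quiz guesses like a discriminator's results (True = "real face")."""
    counts = Counter(zip(answers, guesses))  # keys are (true label, your guess)
    n_real = counts[(True, True)] + counts[(True, False)]
    n_fake = counts[(False, False)] + counts[(False, True)]
    nan = float("nan")
    return {
        # Real faces you correctly accepted as real.
        "real_recall": counts[(True, True)] / n_real if n_real else nan,
        # GAN faces you caught: the discriminator's main job.
        "fake_recall": counts[(False, False)] / n_fake if n_fake else nan,
        # GAN faces that fooled you (= 1 - fake_recall): what the generator maximizes.
        "fool_rate": counts[(False, True)] / n_fake if n_fake else nan,
    }


report = discriminator_report(
    answers=[True, True, False, False, False],
    guesses=[True, False, False, True, True],
)
print(report["fool_rate"])  # 2 of the 3 fakes fooled this player
```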

You can access my quiz here. It was inspired by thispersondoesnotexist.com, which you can check out: every time you refresh the site, it shows you a new GAN-generated face.

Conclusion

This article was an introduction to the technology behind AI-generated images and deepfakes. If you want to learn more about the topic, I suggest the following resources:

- A virtual expert panel on GANs for Good

- Introduction to Generative Adversarial Networks

- Ian Goodfellow: Generative Adversarial Networks tutorial

- GANs Specialization on Coursera

- A podcast with Ian Goodfellow and Lex Fridman on GANs

If you are fascinated by the idea of GANs and want to play around with fake images, then feel free to take my quiz for as long as you want.

If you have come this far, thanks for reading! I hope you enjoyed my article.

Artificial intelligence is about replacing human decision making with more sophisticated technologies — Falguni Desai